About

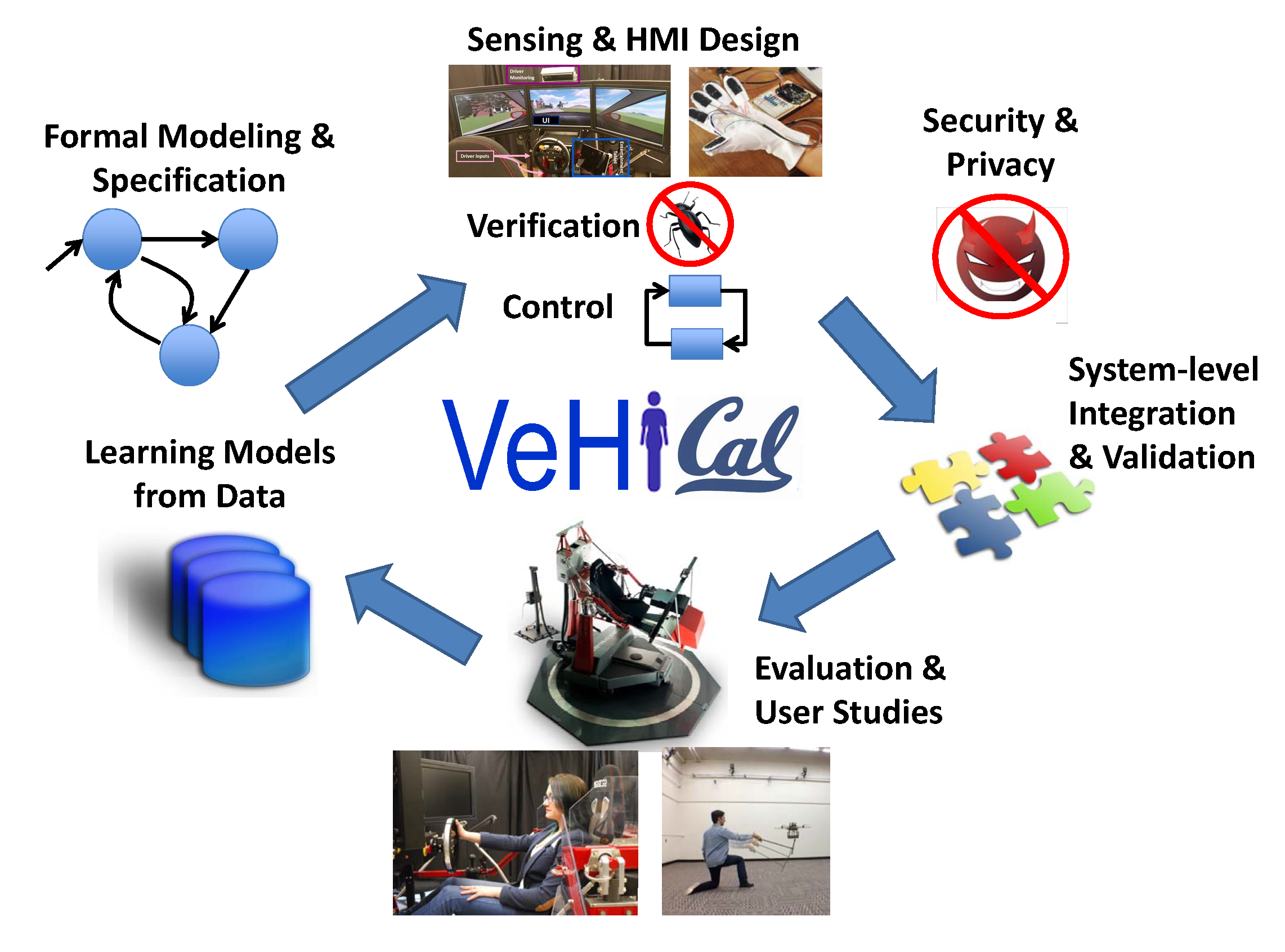

VeHICaL is an NSF Cyber-Physical Systems (CPS) Frontier project that is developing the foundations of verified co-design of interfaces and control for human cyber-physical systems (h-CPS) --- cyber-physical systems that operate in concert with human operators. VeHICaL aims to bring a formal approach to designing both interfaces and control for h-CPS, with provable guarantees. The VeHICaL approach is bringing a conceptual shift of focus away from separately addressing the design of control systems and human-machine interaction and towards the joint co-design of human interfaces and control using common modeling formalisms and requirements on the entire system. This co-design approach is making novel intellectual contributions to the areas of formal methods, control theory, sensing and perception, cognitive science, security and privacy, and human-machine interfaces.

The foundational work being pursued in the VeHICaL project is being validated in two application domains: semi-autonomous ground vehicles that interact with humans inside and outside the vehicle, and semi-autonomous aerial vehicles (drones) that interact with human operators and humans in the environment.

Overview of the VeHICaL Approach

People

Principal Investigators

University of California, Berkeley

Caltech

UNC-Chapel Hill

News

Events

- Sept 27, 2016: VeHICaL Project Kickoff Meeting: Agenda

- Oct 31, 2016: NSF CPS PI Meeting: Overview Presentation (PDF)

- May 9, 2017: VeHICaL industry workshop

- Sept 19, 2017: VeHICaL 1st Annual Meeting:Overview Presentation

- Oct 4, 2018: VeHICaL 2nd Annual Meeting:Overview Presentation

- Oct 3-4, 2019: Vehical 3rd Annual Meeting: (Agenda + Slides)

- Nov 21, 2019: NSF CPS PI Meeting: 15-min Overview Presentation (PDF)

Publications

(This is a partial list --- for other publications, please see the websites of the project PIs)- Erickson R. Nascimento, Ruzena Bajcsy, Michal Gregor, Isabella Huang, Ismael Villegas, Gregorij Kurillo. On the Development of an Acoustic-Driven Method to Improve Driver's Comfort Based on Deep Reinforcement Learning. In IEEE Transactions on Intelligent Transportation Systems, March 2020.

- Tommaso Dreossi, Daniel J. Fremont, Shromona Ghosh, Edward Kim, Hadi Ravanbakhsh, Marcell Vazquez-Chanlatte, and Sanjit A. Seshia. VerifAI: A Toolkit for the Formal Design and Analysis of Artificial Intelligence-Based Systems. In 31st International Conference on Computer Aided Verification (CAV), July 2019.

- Daniel J. Fremont, Tommaso Dreossi, Shromona Ghosh, Xiangyu Yue, Alberto L. Sangiovanni-Vincentelli, and Sanjit A. Seshia. Scenic: A Language for Scenario Specification and Scene Generation. In Proceedings of the 40th annual ACM SIGPLAN conference on Programming Language Design and Implementation (PLDI), June 2019.

- Tommaso Dreossi, Shromona Ghosh, Alberto L. Sangiovanni-Vincentelli, and Sanjit A. Seshia. A Formalization of Robustness for Deep Neural Networks. In Proceedings of the AAAI Spring Symposium Workshop on Verification of Neural Networks (VNN), March 2019.

- Ankush Desai, Shromona Ghosh, Sanjit A. Seshia, Natarajan Shankar, and Ashish Tiwari. A Runtime Assurance Framework for Programming Safe Robotics Systems. In IEEE/IFIP International Conference on Dependable Systems and Networks (DSN), June 2019.

- Marcell Vazquez-Chanlatte, Susmit Jha, Ashish Tiwari, Mark K. Ho, and Sanjit A. Seshia. Learning Task Specifications from Demonstrations. In Advances in Neural Information Processing Systems 31: Annual Conference on Neural Information Processing Systems (NeurIPS), pp. 5372–5382, December 2018.

- Marcell Vazquez-Chanlatte, Shromona Ghosh, Jyotirmoy V. Deshmukh, Alberto L. Sangiovanni-Vincentelli, and Sanjit A. Seshia. Time-Series Learning Using Monotonic Logical Properties. In 18th International Conference on Runtime Verification (RV), pp. 389–405, November 2018.

- Sanjit A. Seshia, Ankush Desai, Tommaso Dreossi, Daniel Fremont, Shromona Ghosh, Edward Kim, Sumukh Shivakumar, Marcell Vazquez-Chanlatte, and Xiangyu Yue. Formal Specification for Deep Neural Networks. In Proceedings of the International Symposium on Automated Technology for Verification and Analysis (ATVA), pp. 20–34, October 2018.

- Tommaso Dreossi, Somesh Jha, and Sanjit A. Seshia. Semantic Adversarial Deep Learning. In 30th International Conference on Computer Aided Verification (CAV), 2018.

- Marcell Vazquez-Chanlatte, Shromona Ghosh, Vasumathi Raman, Alberto Sangiovanni-Vincentelli, and Sanjit A. Seshia. Generating Dominant Strategies for Continuous Two-Player Zero-Sum Games. In IFAC Conference on Analysis and Design of Hybrid Systems (ADHS), 2018.

- Ankush Desai, Amar Phanishayee, Shaz Qadeer, and Sanjit A. Seshia. Compositional Programming and Testing of Dynamic Distributed Systems. In Proceedings of the ACM on Programming Languages (PACMPL) -- OOPSLA, 2018.

- Using a Driver's Eye Data to Predict Accident-Causing Drowsiness Levels. Alyssa Byrnes and Cynthia Sturton. The 21st IEEE International Conference on Intelligent Transportation Systems, November 2018. Drowsy Driver Dataset.

- HindSight: Enhancing Spatial Awareness by Sonifying Detected Objects in Real-Time 360-Degree Video. Eldon Schoop, James Smith, and Bjoern Hartmann. 2018. In Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems (CHI '18)

- Vazquez-Chanlatte, Marcell, et al. "Logical Clustering and Learning for Time-Series Data." International Conference on Computer Aided Verification. Springer, Cham, 2017.

- Active Preference-Based Learning of Reward Functions Dorsa Sadigh, Anca Dragan, S. Shankar Sastry, Sanjit A. Seshia Robotics: Science and Systems (RSS), July 2017.

- Stochastic Predictive Freeway Ramp Metering from Signal Temporal Logic Specifications Negar Mehr, Dorsa Sadigh, Roberto Horowitz, S. Shankar Sastry, Sanjit A. Seshia 2017 American Control Conference (ACC), May 2017.

- Information Gathering Actions over Human Internal State Dorsa Sadigh, S. Shankar Sastry, Sanjit A. Seshia, Anca Dragan IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), October 2016.

- Towards Trustworthy Automation: User Interfaces that Convey Internal and External Awareness Tara Rezvani, Katherine Driggs-Campbell, Dorsa Sadigh, S. Shankar Sastry, Sanjit A. Seshia, Ruzena Bajcsy IEEE Intelligent Transportation Systems Conference (ITSC), November 2016.

- Safe and Interactive Autonomy: Control, Learning, and Verification. Ph.D. Dissertation, EECS Department, University of California, Berkeley, 2017.

- Systematic Testing of Convolutional Neural Networks for Autonomous Driving T. Dreossi, S. Ghosh, A. Sangiovanni-Vincentelli, S. A. Seshia Reliable Machine Learning in the Wild (RMLW 2017)

- Compositional Falsification of Cyber-Physical Systems with Machine Learning Components T. Dreossi, A. Donzé, S. A. Seshia NASA Formal Methods (NFM 2017)

Software

- VerifAI: A software toolkit for the formal design and analysis of systems that include artificial intelligence (AI) and machine learning (ML) components. link

- Scenic: A compiler and scene generator for the Scenic scenario description language, with interfaces to simulators (including the Grand Theft Auto V and Webots simulators). link

Datasets

- The Visual-Acoustic Vehicle Dataset is a set of multimodal data collected from a Lincoln MKS car including vehicle sensors information, 360º image data collected from eight cameras on top of the car, and LiDAR information. Visual-Acoustic Vehicle Dataset.